Hi. I'm Julian Stephan. In today's session, I'll be chatting about Microsoft 365 DSC: how to set up a tenant from scratch and manage configuration drift, along with some other scenarios that might be useful in your environment.

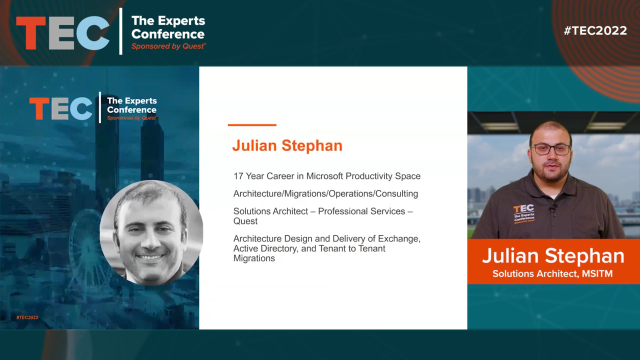

A little bit about me. I've got a 17-year career in the Microsoft productivity stack, focused on architecture, migrations, operations, and the consulting space. Currently, I'm a solutions architect for professional services in the delivery space at Quest Software. I've been with Quest for about a year and a half now. Before that, I came over from the Quadrotech acquisition, where I was previously employed.

In my role today, I'm responsible for architecture, design, and delivery of any flavor of Exchange migration, from on-premises to Office 365 or vice versa, as well as Active Directory and tenant-to-tenant migrations.

So in today's agenda, we're going to talk about what Microsoft 365 DSC is. We'll talk about some desired state configuration things in general to look out for, and the building blocks of how it exists today.

We'll go into how to build and deploy a Microsoft 365 desired state configuration, and a future option of going with a configuration-as-code model, using DevOps, GitHub, things like that to maintain your DSC model.

So what is Microsoft 365 DSC? If you've coded PowerShell before, typically you'll have a set of variables filled with static information in your code. You'll have a function that references those variables to do something. At the end of your script, or somewhere in the middle, you'll have some logging. And then you'll call that function to execute whatever you put in it.

Desired state configuration in general is a little bit different. It's written in a more declarative style: you state what you want in a particular workload. If you've written any C# or Java, the way you declare things there is a closer match, and you'll see that some of the variables within the resources are similar to that kind of model. So you're declaring what you want the script to do, in ordered chunks.

So instead of functions, you use configured instances of resources to write your actual configuration. Your resources, which map to your workloads in Microsoft 365 DSC, are kept in the proper state. Each resource references a Microsoft 365 workload and contains the PowerShell code that puts the configuration you want in place and keeps it there.
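To make the idea of a configured resource instance concrete, here is a minimal sketch. It assumes the `EXOAcceptedDomain` resource from the Microsoft365DSC module; the configuration name, the `contoso.com` domain, and the `C:\DSC\Baseline` path are placeholders that do not come from the session.

```powershell
# Minimal sketch of a declarative Microsoft365DSC configuration.
# The names, domain, and paths below are illustrative placeholders.
Configuration M365TenantBaseline
{
    param
    (
        [Parameter(Mandatory = $true)]
        [PSCredential]
        $Credential
    )

    Import-DscResource -ModuleName Microsoft365DSC

    Node localhost
    {
        # One configured instance of a resource: it declares the end state
        # rather than the steps needed to reach it.
        EXOAcceptedDomain 'PrimaryDomain'
        {
            Identity   = 'contoso.com'
            DomainType = 'Authoritative'
            Ensure     = 'Present'
            Credential = $Credential
        }
    }
}

# Lab-only shortcut: allows the credential to be stored in the MOF in plain text.
# In production, encrypt the MOF with a certificate instead.
$configData = @{
    AllNodes = @(
        @{
            NodeName                    = 'localhost'
            PSDscAllowPlainTextPassword = $true
        }
    )
}

# Compile the configuration to a MOF file, then hand it to DSC to apply.
M365TenantBaseline -ConfigurationData $configData -Credential (Get-Credential) -OutputPath 'C:\DSC\Baseline'
Start-DscConfiguration -Path 'C:\DSC\Baseline' -Wait -Verbose -Force
```

Note that nothing in the resource block says how to create or change the accepted domain. The resource's own code works out whether the tenant already matches the declared state.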

The resources reside in PowerShell modules that you import onto the virtual or physical machine where you host this configuration. These modules are the same PowerShell modules that you connect with today for any of the supported workloads, like Exchange Online, SharePoint, Teams, Azure Active Directory, things like that.
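As a rough sketch of getting those modules onto the host machine, assuming it can reach the PowerShell Gallery and that you are in an elevated session:

```powershell
# Install the Microsoft365DSC module from the PowerShell Gallery.
Install-Module -Name Microsoft365DSC -Scope AllUsers

# Install or update the workload modules that Microsoft365DSC depends on
# (Exchange Online, Teams, Microsoft Graph, and so on).
Update-M365DSCDependencies

# Confirm which version is available on this machine.
Get-Module -Name Microsoft365DSC -ListAvailable
```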

The last part of DSC in general is a portion called Local Configuration Manager, or LCM for short. Your Local Configuration Manager is an agent, I would say an agent service, that's built into PowerShell on Windows Server 2016 or higher.

It sits between your resources, the workload modules you connect with, and what you want to set within those workloads. It runs on a polling interval that you configure on the VM where you set up your first configuration, and there are settings that control how it applies configuration.

Once it applies a configuration, it can monitor for configuration drift and automatically reapply the configuration if something is out of state. Alternatively, you can apply and monitor: you apply your initial configuration, and the monitor then records in the event logs anything that differs, and alerts you there.

You can also add some simple SMTP code. You can use the standard PowerShell code that you would typically use to send email if you want to pull in those events and alert you, as an initial first step, to anything that's different.
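The two behaviors described here correspond to the LCM's `ConfigurationMode` setting: `ApplyAndAutoCorrect` reapplies the configuration when drift is found, and `ApplyAndMonitor` only reports it. A minimal sketch of setting the mode and the polling interval follows; the `C:\DSC\Lcm` path and the 15-minute interval are illustrative choices, not values from the session.

```powershell
# Meta-configuration for the Local Configuration Manager on this machine.
[DSCLocalConfigurationManager()]
Configuration LcmSettings
{
    Node localhost
    {
        Settings
        {
            RefreshMode                    = 'Push'
            # 'ApplyAndMonitor' only logs drift; use 'ApplyAndAutoCorrect'
            # to have the LCM put drifted settings back automatically.
            ConfigurationMode              = 'ApplyAndMonitor'
            # How often, in minutes, the LCM checks the current state.
            ConfigurationModeFrequencyMins = 15
        }
    }
}

# Compile the meta-configuration and apply it to the local LCM.
LcmSettings -OutputPath 'C:\DSC\Lcm'
Set-DscLocalConfigurationManager -Path 'C:\DSC\Lcm' -Verbose

# Verify the result.
Get-DscLocalConfigurationManager |
    Select-Object ConfigurationMode, ConfigurationModeFrequencyMins
```

Starting with `ApplyAndMonitor` is the more cautious choice on an existing tenant, since nothing is changed back until you have reviewed what the monitor reports.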
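A simple version of that alerting could look like the sketch below. It assumes the drift entries land in an event log named `M365DSC`, and the SMTP server and addresses are hypothetical placeholders. `Send-MailMessage` is the standard cmdlet, although Microsoft now marks it as obsolete, so treat this as a first step rather than a long-term design.

```powershell
# Assumption: Microsoft365DSC writes its events to a log named 'M365DSC'.
$since  = (Get-Date).AddHours(-1)
$events = @(Get-WinEvent -FilterHashtable @{ LogName = 'M365DSC'; StartTime = $since } -ErrorAction SilentlyContinue)

if ($events.Count -gt 0)
{
    # Flatten the events into plain text for the message body.
    $body = $events |
        Select-Object TimeCreated, Id, LevelDisplayName, Message |
        Format-List |
        Out-String

    # Hypothetical mail settings; replace with values for your environment.
    $mail = @{
        SmtpServer = 'smtp.contoso.com'
        From       = 'dsc@contoso.com'
        To         = 'admins@contoso.com'
        Subject    = "Microsoft365DSC: $($events.Count) event(s) in the last hour"
        Body       = $body
    }
    Send-MailMessage @mail
}
```

Run on a schedule, for example from a scheduled task on the same VM, this gives a basic notification whenever the monitor has logged something.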

So what are the use cases for using Microsoft 365 DSC today? The large time savings once your configuration is implemented is probably one of the major benefits you'll get from this model.

You could apply this to a new tenant that you're setting up from scratch, or to a dev/test tenant that you want to mess around with for any configuration settings. I've also seen it in a tenant-to-tenant migration scenario.

There are a couple of articles on the practical365.com website that go over certain use cases for DSC. One, on tenant-to-tenant migrations in particular, was written by myself. There's another use case written by Sean McAvinue, who is one of the other Microsoft MVPs in the community. He's got a take on how you would compare and contrast two tenant configurations.

So you can take this and apply it to, like I said, a new tenant that's being stood up. You can apply it to an existing tenant configuration.
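For an existing tenant, one way to get a starting point is to export what is already configured. This is a sketch under assumptions: it uses the module's `Export-M365DSCConfiguration` cmdlet, and the component list and the `C:\DSC\Export` path are examples rather than anything shown in the session.

```powershell
# Credentials for an account that can read the tenant's configuration.
$credential = Get-Credential

# Export selected resources from the existing tenant into a DSC configuration file.
# Review the generated file before applying it to any other tenant.
Export-M365DSCConfiguration `
    -Components @('EXOAcceptedDomain', 'TeamsMeetingPolicy') `
    -Credential $credential `
    -Path 'C:\DSC\Export'
```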

One thing to keep in mind in general: if you've coded PowerShell in the past, you know PowerShell is a powerful language. When you script something, it does exactly what you tell it to do.

So one thing to look out for if you're going to be going down this route with an existing configuration tenant that's in place, if you have a scenario of you're taking some settings from a source tenant, for example, and applying them to a dev test tenant, production tenant, target tenant,
