This post is a continuation of Adventures in Agent Creation - Part 1.

When last I wrote, I introduced an interesting exercise in high-performance counter monitoring for Windows systems using Foglight. I structured the problem and outlined my approach to the solution. Now I would like to go deeper and describe that approach in detail.

The Approach

For an in-depth and well-written discussion of script agents in Foglight, please see Geoff Vona’s article. Now that we have a way of consuming a wide range of external data, we need an efficient mechanism for collecting that data.

The approach I took has only one requirement: the counters you want to collect must be active on the system when you begin the collection process. In designing the code, I chose not to assume anything about which counters exist; you could, for example, have created your own custom counter. Rather than make assumptions, the list that I read recursively presumes only that each counter name is spelled accurately, in proper case. If it is not, that counter is simply not recorded.

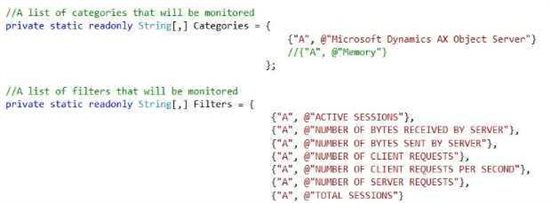

Figure 1 - Counter Definition

Note: the “@” prefix tells the C# compiler to treat the string as a verbatim literal, so the backslashes in counter paths are not interpreted as escape sequences.
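The agent itself is .NET code, so the following is only a language-neutral Python sketch of the counter-selection rule described above, not the author's implementation. It assumes a hypothetical `AVAILABLE` set standing in for the counters registered on the machine, and the counter paths are illustrative. Python raw strings (`r"..."`) play the same role here as C# verbatim strings, keeping the backslashes in counter paths literal. Matching is exact, including case, and anything that does not match is skipped without error.

```python
# Hypothetical stand-in for the counters actually registered on the system.
AVAILABLE = {
    r"\Processor(_Total)\% Processor Time",
    r"\Memory\Available MBytes",
    r"\PhysicalDisk(_Total)\Disk Reads/sec",
}

# Requested counters. Raw strings keep the backslashes literal,
# much like C# verbatim (@"...") strings.
REQUESTED = [
    r"\Processor(_Total)\% Processor Time",
    r"\memory\available mbytes",      # wrong case: will not be collected
    r"\Memory\Available MBytes",
    r"\Custom Category\My Counter",   # not on this system: will not be collected
]


def select_counters(requested, available):
    """Keep only counters whose spelling, including case, matches exactly.

    Unmatched names are dropped silently, mirroring the behaviour the post
    describes: a misspelled counter is simply not recorded.
    """
    return [path for path in requested if path in available]


if __name__ == "__main__":
    for path in select_counters(REQUESTED, AVAILABLE):
        print(path)
```

Dropping unmatched names quietly keeps one bad entry from stopping collection of the rest, at the cost of a missing counter being easy to overlook.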

An important point: because we query system resources, this agent must run with elevated privileges. The permissions granted to it must include the ability to read the registry, query system resources, and perform file operations.

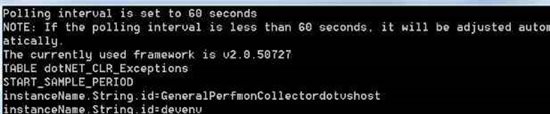

When setting up a script agent, Foglight reads a parameter (“sample_Freq”) from environment variables. This value, in seconds, is the sample interval Foglight uses to run our agent until it is terminated.
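A minimal Python sketch of reading that interval, assuming the value arrives as a whole number of seconds. The 60-second fallback and the handling of missing or malformed values are assumptions for illustration; the post does not describe how the original agent behaves in those cases.

```python
import os

# Hypothetical fallback interval; not taken from the original agent.
DEFAULT_INTERVAL_SECONDS = 60


def read_sample_interval(env=None, default=DEFAULT_INTERVAL_SECONDS):
    """Return the sampling interval in seconds from the sample_Freq variable.

    Falls back to `default` when the variable is missing, is not an
    integer, or is not positive.
    """
    env = os.environ if env is None else env
    raw = env.get("sample_Freq")
    if raw is None:
        return default
    try:
        seconds = int(raw.strip())
    except ValueError:
        return default
    return seconds if seconds > 0 else default
```

Passing a plain dictionary as `env` makes the function easy to exercise without touching the real environment.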

Internally, after parsing the arguments, the agent creates a dataset object. This dataset serves as an in-memory (cached) database that we use to query system values. Building it first lets us avoid a great deal of recursion later.

The benefit of this approach is that all of our categories and counters can be checked up front for state, correctness of value, and existence on the system. We do not need to know any instance information ahead of time. The drawback is that any change in counters requires a restart of the agent process.

Eventually the dataset is populated with related tables that represent the categories, the instances, and the counters we want to collect. Lastly, another table is built to hold the values from each dynamic query of the system's counters. The contents of this table are returned with each iteration of collection.
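The following Python sketch approximates this setup with an in-memory SQLite database standing in for the .NET dataset. It is an illustration under stated assumptions, not the agent's code: `discover` and `read_value` are hypothetical callables that would wrap the real performance-counter APIs, and the table and column names are invented. Validation happens once in `build_dataset`, which is why changing the counter list means rebuilding (restarting), while `collect_once` only refreshes the `sample` table and returns its rows.

```python
import sqlite3


def build_dataset(requested, discover):
    """Validate the requested counters once and load them into an in-memory DB.

    requested: dict mapping category name -> list of counter names.
    discover:  hypothetical callable returning (instance_names, counter_names)
               present on the system for a category, or None if it is absent.
    """
    db = sqlite3.connect(":memory:")
    db.executescript(
        """
        CREATE TABLE category (id INTEGER PRIMARY KEY, name TEXT UNIQUE);
        CREATE TABLE instance (category_id INTEGER REFERENCES category(id),
                               name TEXT);
        CREATE TABLE counter  (category_id INTEGER REFERENCES category(id),
                               name TEXT);
        -- Refilled on every collection pass and returned to the caller.
        CREATE TABLE sample   (category TEXT, instance TEXT,
                               counter TEXT, value REAL);
        """
    )
    for category, counters in requested.items():
        found = discover(category)
        if found is None:  # category not on this system: skip it
            continue
        instances, present = found
        cat_id = db.execute(
            "INSERT INTO category (name) VALUES (?)", (category,)
        ).lastrowid
        # Single-instance categories get one empty-named placeholder instance.
        db.executemany(
            "INSERT INTO instance VALUES (?, ?)",
            [(cat_id, name) for name in (instances or [""])],
        )
        # Only counters that actually exist in the category are kept.
        db.executemany(
            "INSERT INTO counter VALUES (?, ?)",
            [(cat_id, name) for name in counters if name in present],
        )
    db.commit()
    return db


def collect_once(db, read_value):
    """Refill the sample table with current values and return its rows.

    read_value(category, instance, counter) is a hypothetical reader for
    the live value of one counter instance.
    """
    targets = db.execute(
        """
        SELECT cat.name, i.name, c.name
        FROM category AS cat
        JOIN instance AS i ON i.category_id = cat.id
        JOIN counter  AS c ON c.category_id = cat.id
        ORDER BY cat.name, i.name, c.name
        """
    ).fetchall()
    db.execute("DELETE FROM sample")
    db.executemany(
        "INSERT INTO sample VALUES (?, ?, ?, ?)",
        [(cat, inst, ctr, read_value(cat, inst, ctr)) for cat, inst, ctr in targets],
    )
    db.commit()
    return db.execute(
        "SELECT category, instance, counter, value FROM sample ORDER BY rowid"
    ).fetchall()
```

Instances are discovered while the dataset is built, so the caller never has to list them, which matches the point that no instance information is needed ahead of time.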

Armed with a referential dataset of categories and counters, the agent starts a loop that runs at the interval set by sample_Freq. On each pass, Microsoft LINQ expressions walk the counter list and query the system values, reusing what was held in cache from the previous iteration. Because the LINQ calls are the only active operations in the loop, the load on the system remains quite small.
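The collection loop can be sketched in Python as follows. The post does not say how the agent times each pass, so scheduling against a fixed deadline (so that time spent collecting does not stretch the interval) is an assumption, as is the `max_iterations` parameter, which exists only to make the loop testable.

```python
import time


def run_collection_loop(collect, emit, interval_seconds, max_iterations=None,
                        clock=time.monotonic, sleep=time.sleep):
    """Call collect() every interval_seconds and hand each result to emit().

    Runs until the process is terminated, or for max_iterations passes when
    given. Sleeping until a fixed deadline, rather than for a fixed duration,
    keeps the time spent collecting from stretching the interval.
    """
    next_run = clock()
    done = 0
    while max_iterations is None or done < max_iterations:
        emit(collect())
        done += 1
        next_run += interval_seconds
        delay = next_run - clock()
        if delay > 0:
            sleep(delay)
        else:
            # The pass overran the interval: start the next one immediately
            # and measure future deadlines from now instead of catching up.
            next_run = clock()
```

In the agent's terms, `collect` would be the cached query pass and `emit` the step that returns the formatted message; injecting `clock` and `sleep` lets the timing be checked without waiting.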

All that’s left to do is return the contents in a properly formatted message.
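The exact message layout Foglight expects is defined by its script-agent documentation and is not reproduced in this post, so the format below is a hypothetical placeholder. The Python sketch only shows the general shape of the step: a deterministic, line-oriented rendering of the sample rows, with separators escaped so that counter names containing them remain parseable. The default table name is also hypothetical.

```python
def format_message(rows, table="WindowsCounters"):
    """Render (category, instance, counter, value) rows as text.

    The layout (a header line, one 'category|instance|counter=value' line
    per row, then an end marker) is a hypothetical placeholder, not the
    Foglight script-agent format.
    """
    def esc(text):
        # Escape the backslash first, then the separators used below.
        return text.replace("\\", "\\\\").replace("|", "\\|").replace("=", "\\=")

    lines = [f"BEGIN {table}"]
    for category, instance, counter, value in rows:
        lines.append(f"{esc(category)}|{esc(instance)}|{esc(counter)}={value:g}")
    lines.append(f"END {table}")
    return "\n".join(lines)
```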

Figure 3

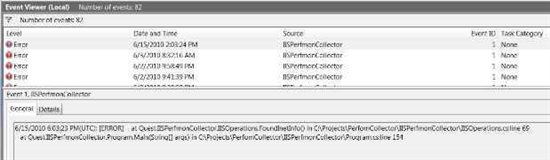

For diagnostic purposes, the agent also writes a file, OutputCounters.xml, which can be found in the install path. To further support triage, exceptions from the agent are written to the event logs of the target platform.

Figure 4
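A Python sketch of the diagnostic dump, assuming the same (category, instance, counter, value) rows used for the message. Only the file name `OutputCounters.xml` comes from the post; the element and attribute names are hypothetical. Routing exceptions to the Windows event log is left out; in Python, `logging.handlers.NTEventLogHandler` (which requires the pywin32 package) is one way to do that.

```python
import os
import xml.etree.ElementTree as ET


def write_diagnostics(rows, directory, filename="OutputCounters.xml"):
    """Write the latest sample rows to an XML file for troubleshooting.

    Element and attribute names are hypothetical; only the file name
    comes from the post. Returns the path that was written.
    """
    root = ET.Element("Counters")
    for category, instance, counter, value in rows:
        ET.SubElement(root, "Counter", {
            "category": category,
            "instance": instance,
            "name": counter,
            "value": str(value),
        })
    path = os.path.join(directory, filename)
    ET.ElementTree(root).write(path, encoding="utf-8", xml_declaration=True)
    return path
```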

In my next segment I will introduce the code and show how to integrate this new agent into Foglight. You can find part 3 here.